- The News: On April 21, 2026, elite white-shoe law firm Sullivan & Cromwell was forced to apologize for submitting a court document containing fake citations generated by artificial intelligence.

- The Cartel's Myth: Until now, the legal establishment has argued that AI errors are the exclusive domain of "sloppy" solo practitioners and public defenders, using this narrative to justify draconian sanctions and ethics rules that keep the technology out of the hands of small firms.

- The Reality: The S&C scandal proves that human oversight is flawed at every level of the profession, including the multi-million-dollar partnerships. The establishment's aggressive gatekeeping is not about accuracy; it is about protecting the billable hour from a technology that makes associate labor obsolete.

- The Stakes: By weaponizing sanctions against AI usage, the legal monopoly is intentionally perpetuating the access-to-justice crisis, ensuring that affordable legal representation remains impossible for the average American.

For the past two years, the American legal establishment has carefully cultivated a specific, self-serving narrative about artificial intelligence. The story, broadcast from the bench in federal courtrooms and codified in state bar ethics opinions, goes like this: Generative AI is a dangerous, unpredictable toy. While it might be tempting for desperate, overworked solo practitioners or underfunded public defenders to use it as a shortcut, it inevitably leads to disaster in the form of "hallucinated" citations and fabricated case law. The solution, according to the cartel, is aggressive gatekeeping—heavy judicial sanctions, public shaming, and draconian regulatory hurdles designed to terrify any small-time lawyer away from utilizing the technology.

This narrative relies on a core, unspoken premise: that the "elite" tiers of the legal profession—the massive, white-shoe law firms with their armies of Ivy League-educated associates and unlimited resources—are fundamentally immune to these errors. The establishment insists that only the "traditional" method of legal research, backed by the exorbitant billing rates of BigLaw, can ensure the integrity of the judicial record.

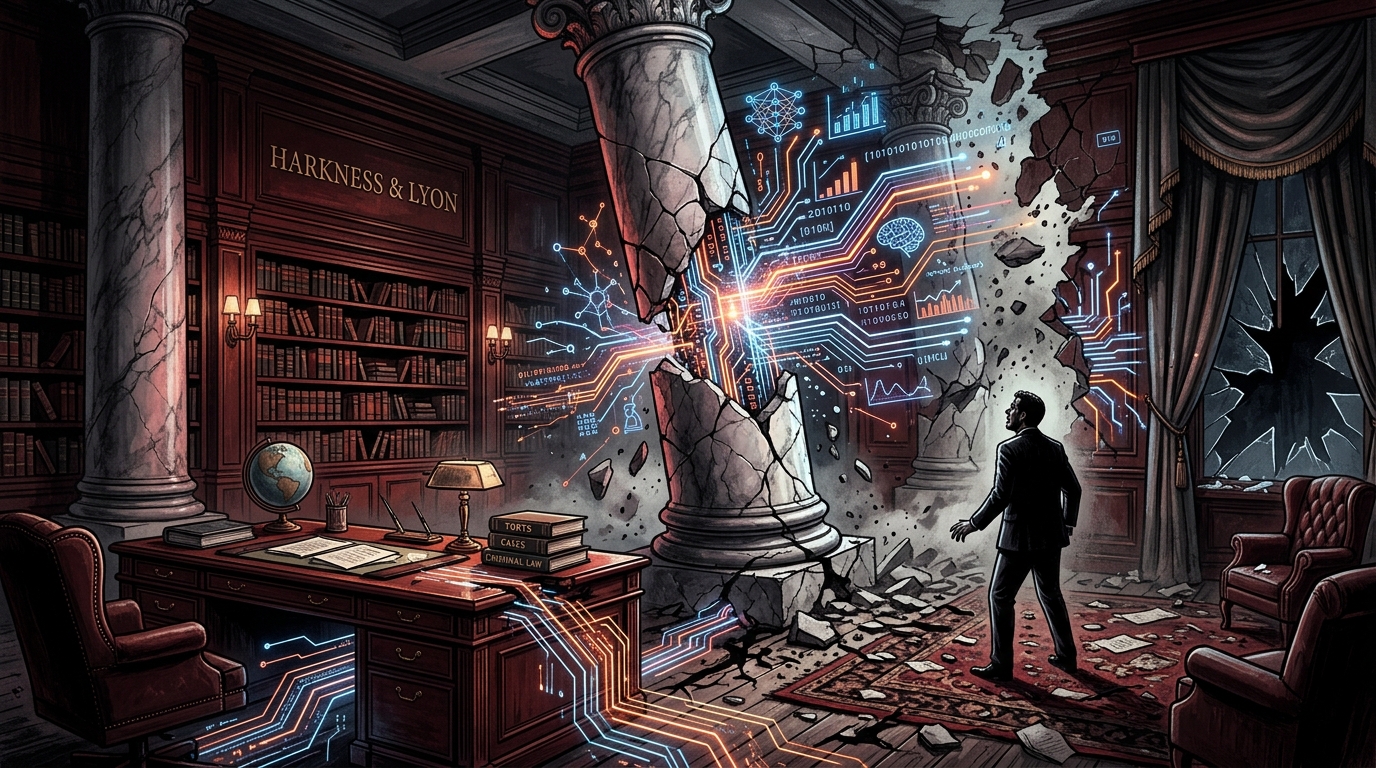

But on April 21, 2026, that entire carefully constructed narrative collapsed overnight. Sullivan & Cromwell, one of the oldest, wealthiest, and most prestigious law firms on the planet, was forced to issue a humiliating public apology for submitting a court filing riddled with fake citations hallucinated by an AI. The elite firewall failed. The myth of the infallible white-shoe associate was shattered. And in the process, the true motive behind the legal profession's hysterical anti-AI crusade was laid completely bare.

The Sullivan & Cromwell Revelation

To understand the magnitude of the Sullivan & Cromwell incident, one must understand the firm's place in the legal hierarchy. This is not a strip-mall law office in a mid-sized suburb. This is a Wall Street behemoth that has represented titans of industry, international governments, and the largest financial institutions on earth for over a century. Their associates are drawn from the top five percent of the top five law schools in the country. Their billing rates are astronomical, often exceeding $1,500 an hour for senior partners and $600 an hour for junior associates who are scarcely a year out of law school.

The core value proposition of a firm like Sullivan & Cromwell is flawless execution. Clients pay a massive premium not just for strategic brilliance, but for the guarantee that no stone will be left unturned, no precedent will be missed, and no embarrassing clerical errors will ever reach a judge's desk. The firm employs vast phalanxes of junior lawyers whose sole purpose is to painstakingly review and verify every assertion, and Shepardize every citation, in every single brief.

And yet, despite this supposedly impenetrable fortress of human oversight, an AI-generated hallucination made it all the way through the gauntlet and into the official judicial record. A machine simply invented a legal precedent, and the highly paid, highly educated humans at one of the world's elite law firms blindly signed off on it.

When solo practitioners make this mistake, the courts and the bar associations react with apoplectic fury. They issue five-figure sanctions. They refer the attorneys to disciplinary committees. They issue press releases warning the public about the dangers of "rogue" lawyers using untested technology. But when Sullivan & Cromwell makes the exact same mistake, the reaction is fundamentally different. There are apologies, yes. There are corrected filings. But the institutional establishment does not view the S&C incident as proof that the firm is fundamentally incompetent. Instead, it is treated as an unfortunate, isolated oversight.

This staggering double standard is the smoking gun. It proves that the legal cartel's war on artificial intelligence has absolutely nothing to do with protecting the integrity of the court, and everything to do with protecting the economic hierarchy of the profession.

The Weaponization of "Hallucinations"

For the past eighteen months, the term "hallucination" has been weaponized by the judiciary to justify a protectionist racket. Judges have seized upon the phenomenon of AI models confidently generating false information as an excuse to implement sweeping, draconian reporting requirements. Federal district courts across the country have instituted standing orders requiring attorneys to explicitly disclose any use of generative AI in their drafting process, and to manually verify every citation under penalty of perjury and severe financial sanctions.

On the surface, this sounds entirely reasonable. Lawyers should, of course, verify their citations. But why are these standing orders applied exclusively to artificial intelligence? Human lawyers have been "hallucinating" citations for centuries. Tired associates misread holdings. Desperate litigators stretch the dicta of a case beyond the breaking point to fit a narrative. Senior partners copy and paste arguments from five-year-old briefs without checking to see if the underlying statutes have been amended. The judicial system is awash in human error, laziness, and cognitive bias.

When a human lawyer submits a brief with a bad citation, it is treated as an ordinary part of the adversarial process. The opposing counsel points out the error, the judge ignores the bad law, and the case moves on. There are no mandatory "Human Error Disclosure Forms." There are no automatic $5,000 fines for failing to catch a typo in a Westlaw search.

But when an AI makes an error, the establishment demands a public execution. The reason for this disparity is purely economic. Human error is built into the business model of the law firm. In fact, human inefficiency is the very engine of law firm profitability. But AI efficiency is an existential threat. By hyper-focusing on AI hallucinations, the cartel is intentionally exaggerating the risks of the technology to justify rules that make it too legally perilous for average lawyers to use.

The Billable Hour and the Leverage Machine

To fully grasp why the legal establishment is so terrified of artificial intelligence, one must perform a forensic accounting of how large law firms actually make their money. The traditional law firm operates on a "leverage" model. A partner, who originates the client relationship, does not actually do the vast majority of the legal work. They delegate the document review, the legal research, the drafting of routine motions, and the endless minutiae of discovery to a pyramid of junior associates.

These associates are paid a handsome fixed salary, but they are required to bill upwards of 2,000 hours a year. The firm bills those hours out to the client at a massive markup. The longer a task takes, the more money the firm makes. If an associate spends forty hours manually reviewing a cache of ten thousand emails for privilege, the firm might bill the client $20,000 for that single task. The incentive structure of the modern law firm is fundamentally, irreversibly opposed to efficiency.

Generative AI detonates this model entirely. A sophisticated large language model can ingest those ten thousand emails, analyze them against the legal standard for attorney-client privilege, and output a highly accurate privilege log in thirty seconds. It can draft a competent fifty-page summary judgment motion in the time it takes an associate to walk to the coffee machine. The technology takes tasks that previously required one hundred billable hours and reduces them to three cents of compute time.

If law firms are forced to pass these efficiencies on to their clients—which a free and competitive market would inevitably demand—the leverage model collapses. The pyramid of associates becomes redundant. The massive profit margins that fund the corner offices and the seven-figure partner draws evaporate. AI does not just change how lawyers work; it destroys how lawyers get paid.

This is the true source of the panic. The judges issuing the sanctions, the bar association presidents writing the ethics opinions, the senior partners demanding strict AI bans—they are all protecting the leverage model. They look at AI and they do not see a tool for justice; they see the death of the billable hour.

The Hypocrisy of the Bar Associations

The state bar associations have played a crucial, complicit role in this protectionist racket. In their stated missions, the bar associations exist to protect the public and ensure the ethical practice of law. In reality, they function as the lobbying and enforcement arm of the legal monopoly.

Over the last year, state bars from Florida to New York to California have issued a flurry of ethics opinions regarding the use of generative AI. These opinions are masterclasses in regulatory capture. They cloak their anti-competitive intent in the sanctimonious language of "client confidentiality" and "competent representation." They decree that lawyers must fully understand the "technical backend" of the AI models they use—a standard of technological fluency that has never been applied to any other software in the history of the profession. Does the average lawyer understand the algorithmic sorting mechanisms of LexisNexis? Do they understand the encryption protocols of their Microsoft Outlook servers? Of course not. But suddenly, when it comes to a technology that threatens their pricing power, the bar demands that every attorney become a machine learning engineer before drafting a contract.

Furthermore, these ethics opinions frequently warn of the dangers of "unauthorized practice of law" (UPL) if AI is used to provide direct legal assistance to consumers without a human lawyer acting as a highly paid intermediary. The bar associations are desperate to ensure that they remain the mandatory, monopolistic tollbooth on the road to justice. They do not care if an AI can draft a perfectly sound, legally binding lease agreement for a low-income tenant for free; they only care that a licensed attorney is not getting their $350 cut of the transaction.

The Access to Justice Atrocity

The most enraging, unforgivable aspect of this institutional gatekeeping is the catastrophic impact it has on the American public. We currently exist in a society where over 80 percent of low-income Americans, and a vast majority of the middle class, cannot afford basic civil legal representation. If you are facing eviction, or a predatory debt collector, or a complex child custody battle, and you do not have ten thousand dollars in liquid cash to hand to a lawyer, the legal system is effectively closed to you. You are on your own against a machine designed to crush the unrepresented.

Generative artificial intelligence represents the single greatest opportunity to solve the access-to-justice crisis in the history of modern jurisprudence. The technology has the capability to translate impenetrable legal jargon into plain English. It can help pro se litigants draft competent, procedurally correct pleadings. It can analyze predatory contracts and flag illegal clauses in seconds. It can democratize legal knowledge on a scale previously thought impossible.

But the legal cartel is actively, intentionally suppressing this revolution to protect its own profit margins. By using the threat of severe sanctions and disbarment to terrify lawyers away from experimenting with AI, the courts are ensuring that legal services remain artificially scarce and artificially expensive. The solo practitioner in a rural county who could use AI to double their caseload and cut their hourly rate in half—thereby providing affordable services to hundreds of desperate clients—is paralyzed by the fear of being made a public example by a hostile federal judge.

The establishment's obsession with the supposed "dangers" of AI hallucinations completely ignores the vastly greater danger of millions of Americans facing the legal system with no help at all. A pro se litigant armed with an AI draft that contains one hallucinated citation is still in a vastly better position than a pro se litigant who has no idea how to file a responsive pleading and simply defaults on the case. The cartel pretends to care about the "integrity of the record," but it is perfectly comfortable with a system where the poor are routinely steamrolled because they cannot afford the entry fee.

The Myth of the Artisan Lawyer

At the heart of the legal profession's resistance to technology is a deep-seated, arrogant belief in the "artisan" nature of legal work. Lawyers like to view themselves as bespoke craftsmen, carefully whittling unique legal arguments out of raw intellectual timber. They believe that their "critical analysis" and "strategic judgment" are mystical human qualities that can never be replicated by silicon and code.

This is false. The overwhelming majority of legal work is not bespoke appellate strategy before the Supreme Court. It is highly repetitive, heavily templated, pattern-matching drudgery. It is reviewing the same standard indemnification clauses in fifty different vendor contracts. It is drafting the same interrogatories in a slip-and-fall case. It is updating the same corporate bylaws for a new LLC. It is, fundamentally, data processing.

Artificial intelligence is far better suited to this kind of data processing than human beings are. It does not get tired. It does not get distracted. It does not misread a key sentence because it was out drinking the night before. The Sullivan & Cromwell incident did not happen because AI is inherently worse than human lawyers; it happened because the human lawyers failed to properly prompt, review, and integrate the tool into their workflow.

The artisan myth is a marketing gimmick designed to justify luxury pricing. If the public realizes that 80 percent of what lawyers do is simply moving standardized text from one document to another, the justification for $500-an-hour billing rates evaporates instantly. The cartel is desperately trying to maintain the illusion of bespoke craftsmanship in the face of an industrial revolution.

The Inevitable Collapse of the Monolithic Firm

Despite the best efforts of the federal judiciary and the state bar associations, the wall is going to fall. The economic incentives driving the adoption of artificial intelligence are too massive to be contained by ethics opinions and Rule 11 sanctions. What the current climate of fear and gatekeeping has achieved is not the suppression of the technology, but the creation of a massive shadow economy within the legal profession.

Make no mistake: lawyers across the country are already using these tools every single day. They are just lying about it. They are using AI on their personal laptops to brainstorm case strategy, summarize thousands of pages of medical records, and outline complex appellate briefs. They extract the brilliant insights from the machine, painstakingly scrub the output to remove any "tells" of AI generation, and submit the work as their own, billing the client for the hours they supposedly spent "researching."

This forced deceit is toxic to the profession. It prevents the open development of best practices. It stops lawyers from sharing effective prompt engineering strategies that could minimize hallucinations and improve accuracy. It creates a culture of paranoia in a profession that is supposed to be grounded in candor.

But eventually, the dam will break. The corporate clients, the massive Fortune 500 companies that pay the bills for firms like Sullivan & Cromwell, are not stupid. They are already integrating AI into their own businesses. It is only a matter of time before general counsel begin demanding that their outside law firms use AI to drastically cut costs. When the clients demand efficiency, the law firms will have no choice but to comply, or they will lose the business to a new breed of technologically fluent, highly efficient legal startups.

The True Lesson of Sullivan & Cromwell

The Sullivan & Cromwell hallucination scandal of April 2026 should not be viewed as a warning about the dangers of artificial intelligence. It should be viewed as the final, definitive proof that the legal establishment's anti-AI crusade is built on a foundation of hypocrisy and self-interest.

The elite layers of the profession are not immune to error. The massive armies of junior associates do not guarantee a pristine judicial record. The traditional, wildly expensive methods of practicing law are fundamentally flawed, and the cartel is using its disciplinary power to prevent anyone from building a better, cheaper system.

The sanctions, the ethics opinions, the fearmongering—it is all the death rattle of a monopoly that knows its time is up. The billable hour is dying. The leverage model is obsolete. The artificial scarcity of legal knowledge is ending. The legal scribes can cling to their quills and their gatekeeping rules all they want, but the printing press has arrived, and it is going to rewrite the entire profession.

The Sullivan & Cromwell incident did not prove that AI is too dangerous for the legal profession. It proved that the legal profession is too arrogant, too entrenched, and too reliant on an outdated economic model to manage the inevitable future. The courts and the bar associations can either adapt to that future and use it to finally provide justice to the unrepresented masses, or they can continue to issue sanctions until the market bypasses them entirely.